Deep Learning

We briefly describe deep learning below. Our results are explained in more detail here.

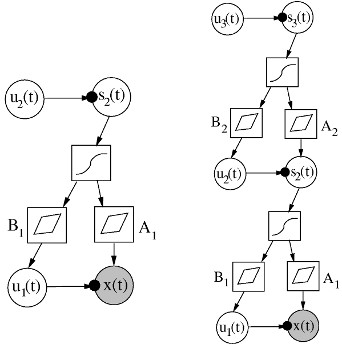

Deep learning is an area of machine learning research that concentrates on finding hierarchical representations of data, starting from observations and moving toward increasingly abstract representations. We presented some early work in that direction in (Valpola et al. 2001, Raiko et al. 2007), using variational Bayesian learning in directed graphical models (see figure).

Nowadays, a typical building block of deep networks is the restricted Boltzmann machine (RBM). It is based on undirected connections between a visible layer and a hidden layer, each consisting of a binary vector. Learning such models has been rather cumbersome, but we have proposed several improvements to the learning algorithm in (Cho et al. 2010, Cho et al. 2011a) that make it stable and robust to the choice of learning parameters. The Gaussian-Bernoulli restricted Boltzmann machine (GRBM) is a version of the RBM for continuous-valued data. We have improved its learning algorithm in (Cho et al. 2011b). We have published software packages implementing our new algorithms. The improvements are also applicable to deep models (Cho et al. 2011c).
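To make the RBM structure concrete, the following is a minimal NumPy sketch of a binary RBM trained with one-step contrastive divergence (CD-1). CD-1 is used here only as a common baseline; it is not the enhanced gradient, adaptive learning rate, or parallel tempering method of the cited papers. The function names, layer sizes, learning rate, and toy data are all assumptions chosen for illustration.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def rbm_energy(v, h, W, b, c):
    """Energy E(v, h) = -b.v - c.h - v.W.h for one binary visible/hidden pair."""
    return -(v @ b) - (h @ c) - (v @ W @ h)

def cd1_update(v0, W, b, c, rng, lr=0.1):
    """One CD-1 parameter update on a batch v0 of shape (n_samples, n_visible)."""
    # Positive phase: hidden activation probabilities given the data.
    ph0 = sigmoid(v0 @ W + c)
    h0 = (rng.random(ph0.shape) < ph0).astype(float)
    # Negative phase: one Gibbs step down to the visible layer and back up.
    pv1 = sigmoid(h0 @ W.T + b)
    v1 = (rng.random(pv1.shape) < pv1).astype(float)
    ph1 = sigmoid(v1 @ W + c)
    # Approximate gradient: data statistics minus reconstruction statistics.
    n = v0.shape[0]
    W_new = W + lr * (v0.T @ ph0 - v1.T @ ph1) / n
    b_new = b + lr * (v0 - v1).mean(axis=0)
    c_new = c + lr * (ph0 - ph1).mean(axis=0)
    return W_new, b_new, c_new

# Toy setup (illustrative only): 6 visible units, 3 hidden units.
rng = np.random.default_rng(0)
n_visible, n_hidden = 6, 3
W = 0.01 * rng.standard_normal((n_visible, n_hidden))
b = np.zeros(n_visible)
c = np.zeros(n_hidden)
data = np.array([[1, 1, 1, 0, 0, 0], [0, 0, 0, 1, 1, 1]] * 10, dtype=float)

for _ in range(200):
    W, b, c = cd1_update(data, W, b, c, rng)

# Hidden activation probabilities for the two distinct training patterns.
hidden_probs = sigmoid(data[:2] @ W + c)
```

The weight matrix `W` carries the undirected connections: the same matrix is used to go from visible to hidden (`v0 @ W`) and from hidden to visible (`h0 @ W.T`).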

It is also possible to use multilayer perceptron (MLP) networks as auto-encoders for finding hierarchical representations of data. In an auto-encoder MLP network, the target output is the input vector itself, and the network includes a middle bottleneck layer with lower dimensionality. Learning such models has been difficult or even impossible, but in (Raiko et al. 2012) we present transformations that make the optimization problem much easier.
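As an illustration of the auto-encoder idea, here is a minimal NumPy sketch of an MLP with a single bottleneck layer, trained by plain gradient descent to reproduce its input. It does not implement the linear transformations of (Raiko et al. 2012); the layer sizes, tanh nonlinearity, learning rate, and synthetic data are assumptions made for the example.

```python
import numpy as np

def init_autoencoder(n_in, n_bottleneck, rng):
    """Small random weights for an encoder/decoder pair (hypothetical helper)."""
    return {
        "W1": 0.1 * rng.standard_normal((n_in, n_bottleneck)),
        "b1": np.zeros(n_bottleneck),
        "W2": 0.1 * rng.standard_normal((n_bottleneck, n_in)),
        "b2": np.zeros(n_in),
    }

def forward(params, x):
    """Encode x into the bottleneck, then decode back to the input space."""
    code = np.tanh(x @ params["W1"] + params["b1"])
    recon = code @ params["W2"] + params["b2"]
    return code, recon

def train_step(params, x, lr=0.05):
    """One gradient-descent step on the mean squared reconstruction error."""
    n = x.shape[0]
    code, recon = forward(params, x)
    err = recon - x  # the target is the input itself
    loss = 0.5 * np.sum(err ** 2) / n
    # Backpropagate through the decoder and the tanh encoder.
    grad_W2 = code.T @ err / n
    grad_b2 = err.mean(axis=0)
    d_code = (err @ params["W2"].T) * (1.0 - code ** 2)
    grad_W1 = x.T @ d_code / n
    grad_b1 = d_code.mean(axis=0)
    new_params = {
        "W1": params["W1"] - lr * grad_W1,
        "b1": params["b1"] - lr * grad_b1,
        "W2": params["W2"] - lr * grad_W2,
        "b2": params["b2"] - lr * grad_b2,
    }
    return new_params, loss

# Synthetic data (illustrative): 8-dimensional points generated from 2 latent factors.
rng = np.random.default_rng(0)
latent = rng.standard_normal((50, 2))
mixing = 0.3 * rng.standard_normal((2, 8))
x = latent @ mixing

params = init_autoencoder(n_in=8, n_bottleneck=2, rng=rng)
losses = []
for _ in range(1000):
    params, loss = train_step(params, x)
    losses.append(loss)

code, recon = forward(params, x)
```

Here `code` is the low-dimensional representation produced by the bottleneck layer, and `losses` tracks the reconstruction error over training.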

Two master's theses have also been written on the topic:

- Cho, KyungHyun. Improved Learning Methods for Restricted Boltzmann Machines. Master's Thesis, Aalto University, 2011.

- Calandra, Roberto. An Exploration of Deep Belief Networks toward Adaptive Learning. Master's Thesis, Aalto University, 2011.

References

T. Raiko, H. Valpola, and Y. LeCun. Deep Learning Made Easier by Linear Transformations in Perceptrons. In Proc. of the 15th Int. Conf. on Artificial Intelligence and Statistics (AISTATS 2012), JMLR W&CP, volume 22, pp. 924-932, La Palma, Canary Islands, April 21-23, 2012.

K. Cho, T. Raiko, and A. Ilin. Gaussian-Bernoulli Deep Boltzmann Machine. In the NIPS 2011 Workshop on Deep Learning and Unsupervised Feature Learning, Granada, Spain, December 16, 2011c.

K. Cho, A. Ilin, and T. Raiko. Improved Learning of Gaussian-Bernoulli Restricted Boltzmann Machines. In Lecture Notes in Computer Science, volume 6791, Artificial Neural Networks and Machine Learning - ICANN 2011, pp. 10-17, Espoo, Finland, June 14-17, 2011b.

K. Cho, T. Raiko, and A. Ilin. Enhanced Gradient and Adaptive Learning Rate for Training Restricted Boltzmann Machines. In the proceedings of the International Conference on Machine Learning (ICML 2011), Bellevue, Washington, USA, June 28-July 2, 2011a.

K. Cho, T. Raiko, and A. Ilin. Parallel Tempering is Efficient for Learning Restricted Boltzmann Machines. In the proceedings of the International Joint Conference on Neural Networks (IJCNN 2010), Barcelona, Spain, July 18-23, 2010.

T. Raiko, H. Valpola, M. Harva, and J. Karhunen. Building Blocks for Variational Bayesian Learning of Latent Variable Models. In the Journal of Machine Learning Research (JMLR), volume 8, pp. 155-201, January 2007.

H. Valpola, T. Raiko, and J. Karhunen. Building blocks for hierarchical latent variable models. In the proceedings of the 3rd International Conference on Independent Component Analysis and Blind Signal Separation, ICA 2001, pp. 716-721, San Diego, California, USA, December 9-12, 2001.